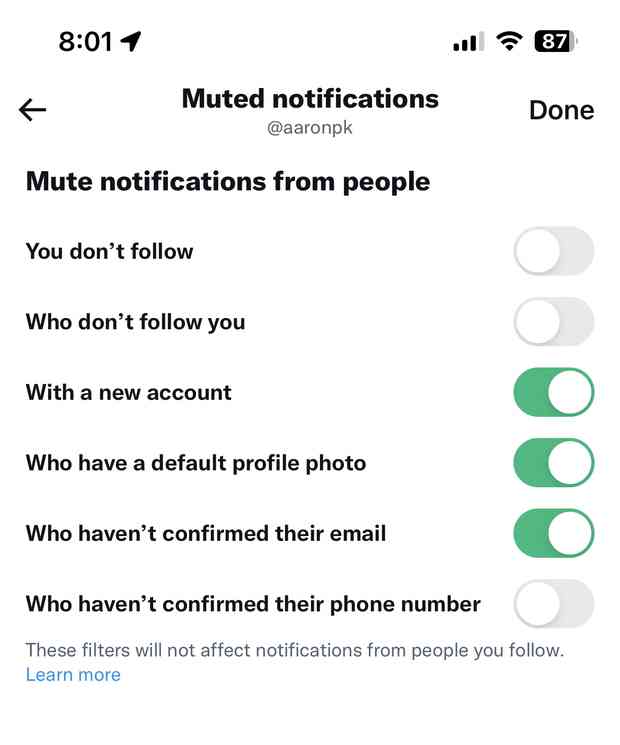

Now that legacy verified checkmarks are gone, this is a great time to make sure you have a link to your website in your Twitter bio and that your website links back to your Twitter account!

https://aaronparecki.com/elsewhere/
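If you want to check the "website links back" half yourself, here is a minimal sketch. It assumes the link back is marked up with `rel="me"`, a common convention for this kind of two-way link that the post itself does not name. The function names, the sample HTML, and the `example` handle are all hypothetical, and the URL comparison is deliberately simplified (it ignores case and a trailing slash).

```python
from html.parser import HTMLParser


class RelMeParser(HTMLParser):
    """Collect href values from <a> and <link> tags whose rel includes "me"."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag not in ("a", "link"):
            return
        attr = dict(attrs)
        # rel can hold several space-separated values, e.g. rel="me noopener"
        rels = (attr.get("rel") or "").lower().split()
        href = attr.get("href")
        if "me" in rels and href:
            self.links.append(href)


def rel_me_links(html):
    """Return every rel="me" href found in the given HTML string."""
    parser = RelMeParser()
    parser.feed(html)
    parser.close()
    return parser.links


def normalize(url):
    # Simplifying assumption: ignore case and a trailing slash when comparing.
    return url.rstrip("/").lower()


def links_back(html, profile_url):
    """True if the page HTML contains a rel="me" link to profile_url."""
    target = normalize(profile_url)
    return any(normalize(link) == target for link in rel_me_links(html))


# Hypothetical page content; in practice you would load your own site's HTML.
sample_html = '<a href="https://twitter.com/example" rel="me">Twitter</a>'
print(links_back(sample_html, "https://twitter.com/example"))
```

This only covers the website side. The other half, a link to your website in your Twitter bio, is easiest to confirm by looking at your profile.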
